We all know that some technical topics become hot, stay hot for a while, and then fade from attention in academia and in the research world more generally. Other topics stay stable for extended periods, say, the span of one career. This post is my reflection on some technical topics that I have seen go through the different phases of the hype cycle and some that I have seen burn with a steady light, along with my speculation on the causes of these two patterns. An important clarification: in this article, I do not mean “hype” in a negative way, but rather to mean that there is great activity in that area.

I will pick three technical areas to analyze, for no real compelling reason. My observations are made as a non-expert in each of these, as an interested observer and a consumer of the discoveries of these technical areas in my own systems research.

- Artificial intelligence, or its jazzier, more recently arrived cousin, machine learning

- Wireless sensor networks, or its newer and currently hot-but-cooling-off incarnation, Internet of Things

- Software engineering, a staple of software development, but one which is considered by the world-at-large to be the subject of staid textbooks meant to gather dust

Artificial Intelligence

This was supposed to change the world starting in the 1960’s, when there was the promise of the intelligent assistant that could understand what we need (blueberry bagels toasted just so and the right dress for the official reception today) and cater to our whims and fancies. This was hype to rival the biggest hypes we know of today: billions of dollars spent, when a billion was an awe-inspiring number, and claims of successes in labs. But well-hyped discoveries, if they are not replicated widely and under unsupervised settings, tend to deflate, and deflate fast. Simplifying wildly, the AI of those days was rigid and rule-based, unlike the flexible algorithms that we are familiar with today. The yesteryear systems could deal only with data that had been formatted just right and from which every ounce of noise had been squeezed out. Under tightly restricted conditions they could show off their skills. One example is the natural language processing system developed in the early 70’s at MIT, which could only understand instructions to manipulate toy blocks. But such systems failed when the training wheels were taken off and they were asked to navigate the wider world, which had a lot more complexity than toy blocks.

So AI became a dirty word. Funding agencies ran away from it as quickly as they could, and academics and researchers in general followed suit. This was, to all intents and purposes, a flight in droves, well past the knee of the exponential curve. A few of the faithful stuck around and toiled in the unglamorous basement of the field. Small wins started to appear in the wider world, such as robots doing tedious, heavy-duty, or dangerous tasks like descending into the depths of volcanoes. And on the lighter side, several things captured the human imagination. Most prominent were the Star Wars movies: if we can have an R2-D2 or a C-3PO do such wonderful things on screen, why can’t we have them in the real world? There was also the RoboCup soccer tournament, where teams of miniature robots squared off against each other.

On the algorithmic side too, there were some breakthroughs, which started as a steady trickle and then gathered pace, and which helped bring AI into today’s world of Machine Learning or Machine Intelligence, a super-charged area of activity. One prime example was in deep neural networks, where the back propagation algorithm allowed a network to adjust itself to different kinds of data. This, coupled with some trends in technology and some killer apps, has helped the area get up to warp speed. The main trend is that the amount of data that everyone (governments, businesses, and even individuals) can collect has grown tremendously, and there is value to be mined from such data. The killer apps started off with image recognition and voice recognition for a greater degree of automation, and have since marched on to include many other application areas, prominently driverless cars.

So it is that the area of artificial intelligence, now retargeted mostly to deal with large volumes of unstructured data, is enjoying a heyday today after languishing behind its more illustrious peers for all of my graduate school days, and much longer.

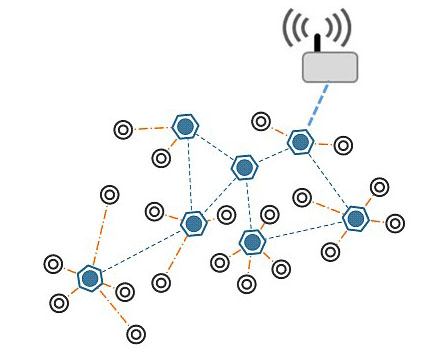

Wireless Sensor Networks (WSNs)

The promise was colossal, embodied in the startling vision of the smart dust – a cubic millimeter device which integrated sensing and computing capabilities. One of the founders of this vision, Kris Pister, forecast boldly back in the late 1990’s:

“In 2010 MEMS sensors will be everywhere, and sensing virtually everything. Scavenging power from sunlight, vibration, thermal gradients, and background RF, sensors motes will be immortal, completely self contained, single chip computers with sensing, communication, and power supply built in. Entirely solid state, and with no natural decay processes, they may well survive the human race. Descendants of dolphins may mine them from arctic ice and marvel at the extinct technology.”

Who could resist the charms of such technology? Certainly not governments, funding agencies, and hence by extension, academic researchers. If you expect this build-up to lead to me telling you that this vision has failed, that is not so. Sure, it has not lived up to the grand designs of its founders, but it has permeated our lives slowly and unobtrusively, as successful technology is supposed to do. So I think nothing of the smart thermostat in our house knowing that we have a bevy of guests and that it should dial down the humidity in the living room. And many people think nothing of conversing with that unobtrusive dot that can order Chinese food for them. And if you happened to have unfortunately named your daughter Alexa, then you have probably already made the rounds of the county authorities to get her name changed.

So if I think of how the field evolved, it caught the imagination of the public due in part to the enticing idea that it sought to bridge a divide that has been with us since the dawn of the digital age. The divide is between the physical world that we interact with for most of our time and the digital world of laptops and servers. With sensor networks, we would have the ability to sense the physical world and react intelligently to it, maybe even shape it for our safety or comfort.

So this to me is a necessary ingredient for a topic to take off: a firing of the imagination, helped along by a few “killer apps”, not just among the inner group of researchers in an area, and not necessarily among the broad public, but among enough people in technology and policy-making bodies for the technical topic to grow wings and take flight.

The grand vision was ably translated by the leading academic researchers into challenging but attainable technical problems that larger parts of the community could sink their teeth into. This led to some really cool core technical developments: how to build networks on the fly using emergent properties, how to deal with the vagaries of the shared wireless spectrum, and how to keep network communication going in the face of unpredictable events like mobility. And then there was the “valley of death”, where these cool technical solutions were not picked up by enough of the fearless to make a commercial success out of them. And so it was that the enthusiasm faded and the field went into the well-known dormant phase.

Today we see intense activity in the area of Internet of Things (IoT), which can be thought of as an extension of the technical area of WSNs. The WSN developments were taken up by a handful of startups (Crossbow, Memsic, etc.) and then by some known players (Intel, Arduino, etc.), and they kept the news cycle going with improvements, albeit incremental ones. And then came the whirlwind of IoT with two additional spins: the devices are for consumer use (in addition to the industrial use of WSNs) and there is the possibility of taking actions in response to sensed and analyzed events.

So in hindsight, the area is witnessing the second hype cycle thanks to several circumstances, which could not all have been meticulously planned.

Software Engineering

Our lives run on software and it is only fit that we take development of software seriously, i.e., as a rigorous engineering enterprise. Hence, software engineering.

This is an area that seems to have enormous resonance in the tech industry because companies’ fortunes are so intricately tied to the strength of their software, both software in external products and software used internally. We teach software engineering in our curriculum, aware of the tension that the real large-sized software design and development exercises cannot be done within the confines of the classroom due to several reasons — it will take much more time than we can spend in the classroom, we do not have access to some “real world” software, and it is hard to factor in real end user requirements in such exercises. So some of our industrial colleagues carp that they have to teach all their new recruits how to engineer software from scratch, while another subset, decidedly a minority, appreciate when our students graduate knowing the basics of some bread-and-butter skills, like regression testing and software version control.

On the research side, this area seems to have run at a steadier pace than the two areas we discussed above. As software gets more complex (we have heard it so many times that this is an axiom by now), there is a need for research methods to deal with such software, for example, how to automatically figure out where the bugs lie and how to incrementally improve software to meet new user requirements. The software ecosystem seems to change with enough speed, and with enough stride in each step, that the software engineering field has always had interesting problems to work on. I have not heard from my academic colleagues in this area that there has ever been a sudden influx of pots of gold descending on their field, but I have heard that research interest has been on a steady, if unspectacular, rise. You can, for example, look at this trend in the number of paper submissions to the two top conferences (ICSE and ASE).

One development that is crucial to the continued relevance of any research field is real-world data, both to motivate the solutions and to evaluate them. The software engineering field benefits enormously from the growth in open source software and, importantly, from the open source bug repositories and discussion groups. These bring real-world data into the field and serve as concrete instances of the field’s impact.

So it is that the area of software engineering has gone through occasional small peaks in the hype cycle. Think back to the unveiling of Java as the write-once-run-anywhere programming environment, or Android taking over as the default operating system of the mobile world. But for the most part, it has run a steady race.

Technical topic areas in the academic research world go through hype cycles, some experiencing a single cycle and some multiple. The astute amongst us can align ourselves well with the peaks and troughs, extracting some ground truths and learning some transferable skills in the process. The gifted amongst us can lead the charge on one topic area, and the truly superhuman can do this with multiple topic areas as they take off.