When we design algorithms or implement them in computing systems, we rarely think about the policies that they instantiate. We rarely think, beyond trite generalizations, of the countless users who may be at the receiving end of the vagaries of our algorithms. If our project becomes immensely successful, then we will count our users in the tens of thousands or more. And what about the corner cases of our systems that will inconvenience, irritate, annoy, or even infuriate these users? I was recently one such user, at the receiving end of an airline standby list ordering algorithm.

Perhaps a perfectly well-meaning software engineer implemented the algorithm, thinking of all the criteria that seemed reasonable to her. But little did she realize that when a snowstorm hits the Midwest, the wind chill drops so low that the airplane deicing machine freezes over, and all this happens at the peak of the winter holiday travel season, this well-meaning algorithm will drive so many up the wall. This made me think of the unintended consequences of our software systems, and this article is a call for us to ponder them and take some proactive steps.

We as Computer Scientists or Engineers often do not think of the ramifications of the technology that we develop. We are, understandably, focused on developing great technology. It gives us that dopamine kick when our elegantly designed software runs and passes all the unit tests, all the integration tests, even all the system tests with flying colors. We are often blind to the end users who are going to use our software, sometimes for quite important purposes. Recently I had occasion to reflect on whether some good can come out of a greater awareness of the effect of our software on the broader user base.

I was flying out to India in December from the bone-chilling Midwest (of the US). Airport displays were alight with depressing news of flight delays and cancellations, the perfect storm of a winter storm and peak holiday travel. After several re-bookings, I was set to fly out of Houston for the international leg of the trip. So I had to take domestic flights to Houston and had the not-uncommon misfortune of seeing myself bumped to the standby list. That’s when I experienced the vagaries of black box algorithms. My standby list position of 1 would be pushed down to a hopeless 6 and then tantalizingly rise to a 3. All the while my chances of finding my way to family, some 8,000 miles away, rode on the vagaries of this algorithm.

There are clearly matters of much higher importance where black box algorithms are making decisions. Whether someone will get a loan for buying a house is dependent on the opaque policy instantiated in some black box algorithm. Ditto whether someone’s parole application will get approved, some startup entrepreneur will get capital for his venture, or someone on an organ donation waitlist will get his life-saving treatment.

So we take comfort in the illusion that we are only the developers of the software and someone else, an invisible generic set of policy makers, will make the tricky decisions for us. I believe it is wise, and even feasible, for us to step out of this illusion. We can stretch our horizon, even if just by inches, to think of the dual, or N-way, uses of our software and take steps to amplify the positive outcomes and reduce the likelihood of the undesirable ones.

Being proactive is well within our reach as academics. We can socialize this idea among our technical communities, write perspective articles to illuminate the various effects of the technologies of the day, and engage with our funding agencies so that they think about the broader impacts of our projects while making funding decisions. On a prosaic level, we can encourage technical tracks at our conferences where such discussions can take center stage.

It is arguably harder to influence policy in the commercial sector but, I believe, still feasible. One can engage with the senior decision makers in the organization to help them understand the consequences, intended or unintended, of the technology products that the company releases. And then, when one rises to these levels of pivotal decision making, one can inculcate these principles in the decision-making process. Common across the two sectors, we can whittle away our constricting perception of an impermeable barrier between the technology developers and the policy makers. Rather, one can engage with the policy makers. In some instances such forums already exist, such as the US Department of State Embassy Science Fellows Program; in other cases, one will have to charge ahead and create such opportunities. Sometimes, as in my experience, it starts with an easy conversation at a coffee table.

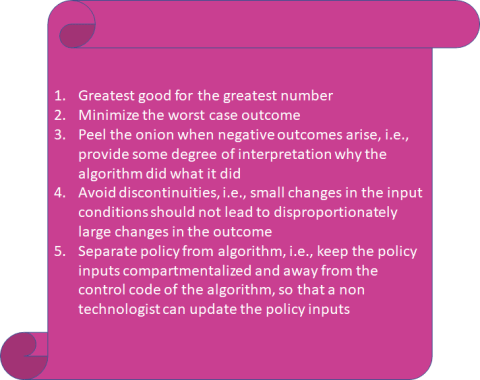

To distill a lot of the background thinking that must go into the decision making for our algorithms, one can aim for the following outcomes that will apply to a large swath of our software systems:

To sum up, software systems have become a central part of our lives and they are increasingly making critical decisions that affect us. Too often the decisions are opaque to the owners of the systems, let alone the end users. There has been noteworthy progress in some fields of computing to make this less so, such as through a fast-growing body of work in interpretable machine learning. I believe we need to accelerate this process and spread it much more widely to all manner of software systems. In this article, I lay out some of the desirable outcomes we need to aim for and some modest but practical first steps. We aim for the Eden where our software systems will cause less and less forehead-slapping frustration for the end users due to opaque and unintended consequences.