In a previous post, I used a dystopian scenario to show how autonomous systems can reduce our lives to a hapless destiny. In the last post, I discussed some design and development principles that we as technologists can follow to avoid plunging into such dystopian scenarios. In this article, I will hypothesize about some policy actions we can take to beat the autocracy of autonomous systems. I do so knowing full well that wading into policy issues is anathema to most of us technologists. But there are worse things we can do with our time and talents.

- What some of the feared future scenarios are

- What we can do technologically to prevent such scenarios

- What we can do policy-wise to prevent such scenarios

Some Guiding Principles for Policy

As technologists, we are notoriously averse to participating in policy discussions, even when we believe strongly that policy should be informed by good science and engineering, and even when we are the ones doing that good science and engineering. The reasons are fairly self-evident: our reward system in academia does not factor in policy victories, and in industrial settings there is usually a stern line of separation between those who build technology and those who argue for its uses. But I argue that, especially in the area of autonomous systems, it makes urgent sense for us to inform policy makers by engaging in some policy debates. Sure, this will involve stepping outside the comfortable, hygienic space of cold reason and unarguable formulae. But without this, as autonomous systems are adopted at breakneck speed, their misuse becomes increasingly likely. So here are three things that I believe we can do without being tagged as one who “doth protest too much.”

Engage in public forums to inform others, especially policy makers, about the uses and misuses of your technology

When we try to engage with policy makers, we are often surprised to find that arguments do not win the day on pure scientific or engineering merit. There are broader contexts at play, of which we are often unaware because they are not part of our day jobs. The discussions often call to mind the saying commonly attributed to Mark Twain:

“What gets us into trouble is not what we don't know. It's what we know for sure that just ain't so.”

Policy makers and their retinue, such as congressional staff members, are trying to drink from a firehose of information. It is wise for us not to add to that firehose but rather to help them glean the key points, the actionable insights, and the unbiased conclusions. As academics, we can speak our minds free from commercial pressures, so our unbiased recommendations, if delivered well, carry a lot of weight.

It also amplifies the effect of our recommendations if we tie them to issues that resonate with policy makers, e.g., issues that matter to an elected official's constituents. Does the electorate care about the security of autonomous systems? Sure, if there has recently been a privacy breach of a database collected by an autonomous system.

Applaud victories of your technology and flag misuses

When technology helps achieve a newsworthy goal, the mass media may pay little attention to the technology behind it. For example, when Project Loon provided internet connectivity to people in remote parts of Puerto Rico after a hurricane, we could have used that opportunity to highlight the research in machine learning, wireless communication, and balloon navigation that went into it. Such work can be highlighted through blog posts on forums read by people outside our technology circles (such as Medium and the New York Times blogs), or through university or technical community news releases.

The other side of the coin is misuse. When we see a technology that we understand deeply being misused, it makes sense to stand up, explain the fundamental reason behind the misuse, and describe how it can be mitigated. For example, as society becomes inured to news of data breaches that expose personal information, security researchers have a useful opportunity to talk about the benefits of two-factor authentication. The cost of deploying it is far smaller than the psychological cost, and increasingly the dollar cost, of a large-scale data breach.

Create a closed feedback loop between real-world use and technology development

The leading-edge technology that we develop is often a work in progress. Once we release our open source software and it becomes wildly popular because it fills a need for a large enough number of people somewhere in the world, our work is not done. As we see uses and misuses of the technology, we should revise it, for example through new software releases. This is, of course, not as exciting as the thrill of putting out a novel software package in the first place, but it is the right thing to do. It is also necessary if we want our technology to continue to be embraced.

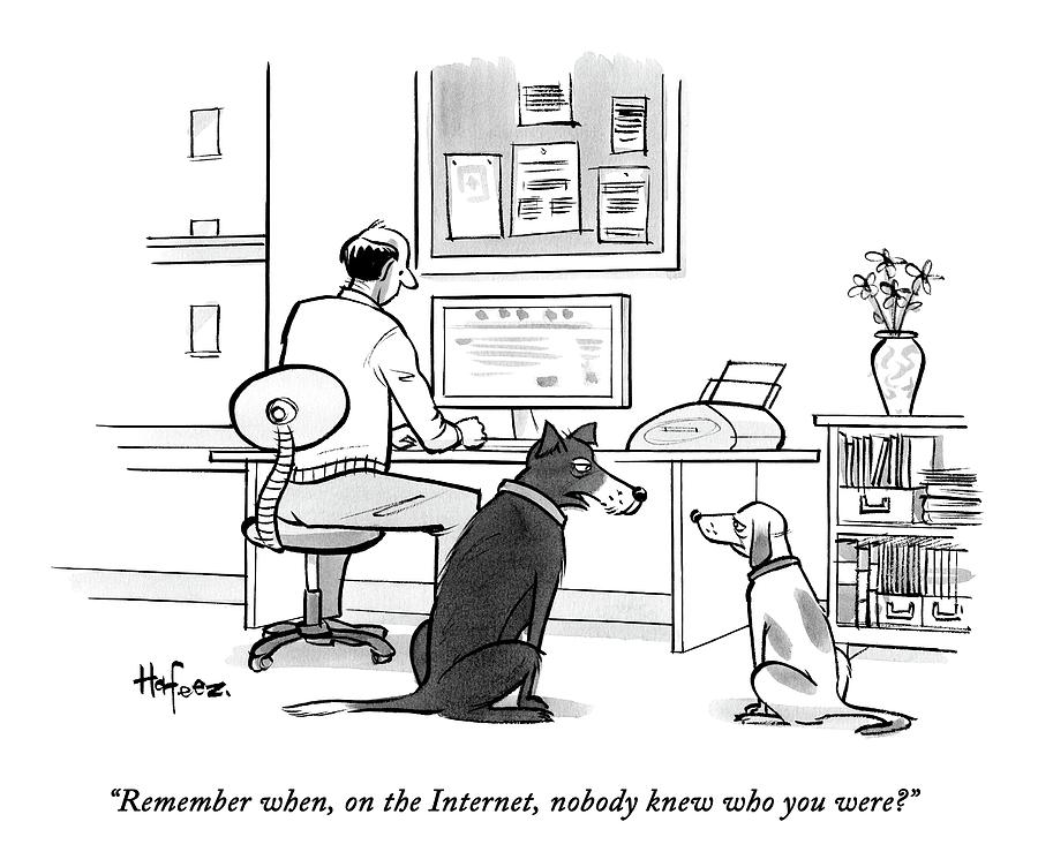

A good case in point is the Tor network and its software for enabling anonymous communication on the internet. Since its inception in the 1990s, it has been at the forefront of battles to increase internet freedom, helping people evade censorship, tracking, and surveillance. Started with funding from the US Navy and developed by researchers employed by the Navy, it has gone through 19 releases in the last 8 years, and every year the top security conferences publish a handful of papers on attacks against Tor and the resulting improvements to it.

So, to sum up, if we as technology developers nurse the ideal of our technology changing lives, we need to engage in shaping policy. We do not have to go the full distance on this; we can stick to our day jobs of doing good science and engineering. But here even half measures will be much better than the status quo. First, we can inform and educate our policy makers through articles and civil discussions in which we lay out the powers and the limits of the technology. Second, we can publicly and loudly applaud when a technology that we care about achieves a noteworthy victory in the public sphere. Likewise, we can publicly and loudly decry a misuse of our technology. Finally, we can adopt the philosophy that our labor-of-love technology is not a finished story with its first release. Rather, it needs to be refined with inputs from its real-world deployment. With these ingredients in place, we would have taken steps toward technology for the greater good, and moved beyond the hand-wringing we typically do when we see technology faltering in its transition outside academia.